AI Training Pack

You won't be fighting about how to use AI. You'll be figuring out what's next.

You spend $10K+ per engineer per month. A few of them have figured out AI workflows on their own. Most haven't — not because they're not smart, but because they're buried in their actual jobs.

Experimenting with AI, refining what works, and turning it into a repeatable process — that's been my full-time job for the past 9 months. Your engineers don't have that luxury. They're shipping features, fixing bugs, sitting in standups. They don't have the bandwidth to also be your AI workflow R&D department.

And your VP of Engineering? Is figuring out AI workflows her full-time job? She's managing people, running sprints, putting out fires. Even the best technical leader can only teach what they've had time to learn.

The average programmer spends 52 minutes a day writing code.[source] The other 7+ hours go to coordination, communication, and context-switching.

Most people only think about using AI to code faster. The overlooked opportunity is using AI to improve the inputs going into engineering and the outputs coming back to the rest of the team — so your engineers get more of their day back to actually build.

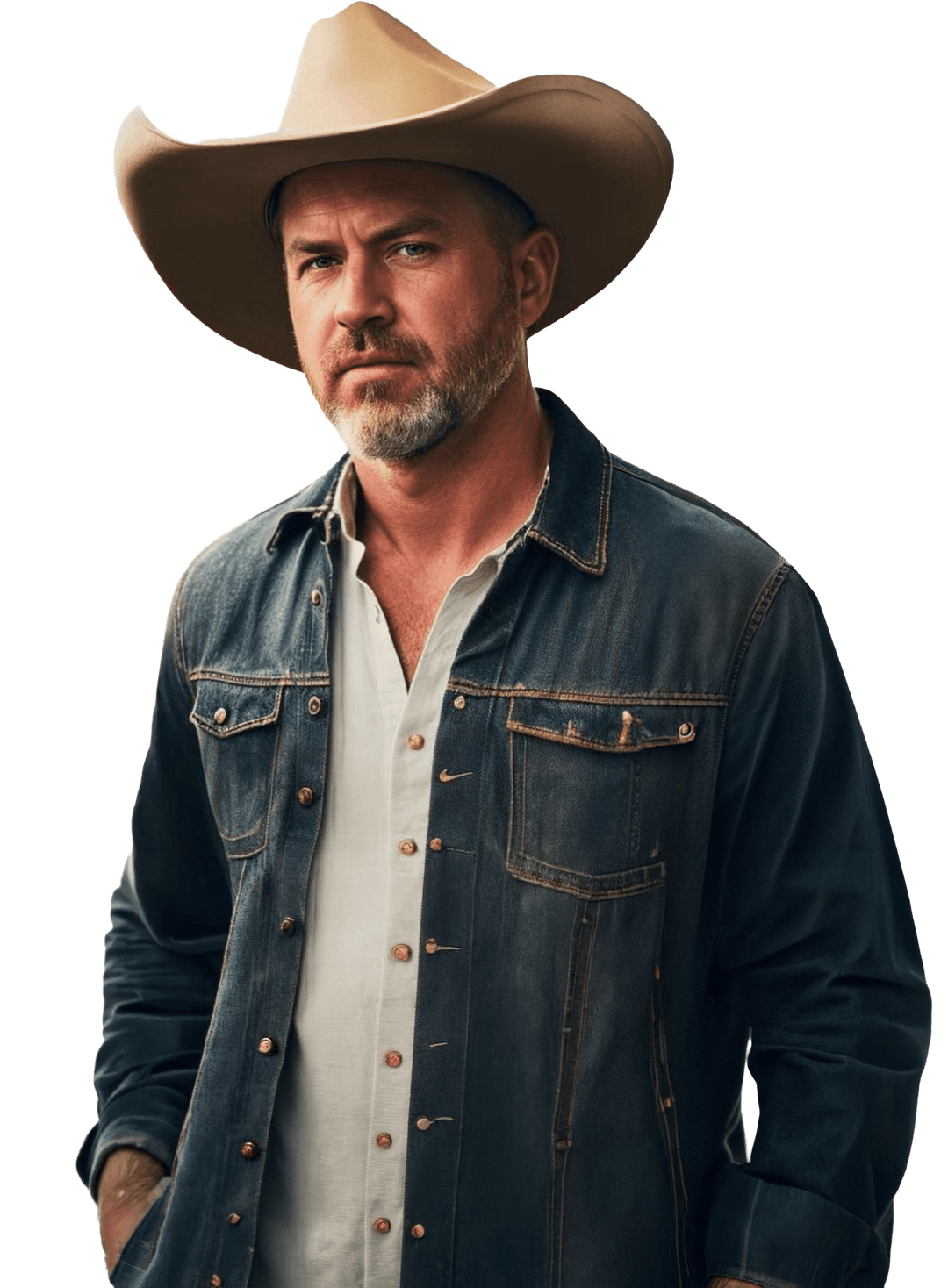

I've been a CEO twice, a CTO three times, and a head of product. I also have the benefit of pattern recognition from working with multiple companies and engineering teams on this exact problem. This isn't about teaching engineers to code — it's about getting your team to ship products faster. I run Dear Ben, a podcast on practical AI for CEOs, and recently built Promptó (a desktop app to help you get better responses from your AI tools). That's founder experience, not engineering experience.

$15,000 for up to 5 engineers. $1,000 per engineer above 5. (A 10-person team: $20,000.)

That's roughly what you pay one mid-level engineer for five weeks. Start with a pilot group. Expand after you've seen what works.
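If you want to price out your own team, the math is simple. Here's a quick sketch in Python. The function name and constants are mine, purely for illustration, and it assumes the per-engineer rate applies only to engineers beyond the fifth:

```python
BASE_FEE = 15_000            # covers up to 5 engineers
INCLUDED_ENGINEERS = 5
EXTRA_PER_ENGINEER = 1_000   # each engineer above 5


def training_pack_price(engineers: int) -> int:
    """Return the total fee in USD for a team of the given size."""
    if engineers < 1:
        raise ValueError("team must have at least one engineer")
    extra = max(0, engineers - INCLUDED_ENGINEERS)
    return BASE_FEE + extra * EXTRA_PER_ENGINEER


print(training_pack_price(5))   # 15000
print(training_pack_price(10))  # 20000
```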

I'll tell you if the Training Pack fits or if something else makes more sense.

This isn't just about knowing tools — I could give you a list of packages. It's about operationalizing a process that works for how your company works, while incorporating elements that might seem foreign or counterintuitive — because the best AI workflow may not look like an extension of your current one.

I give your team the system your power users are missing. Not "how to prompt better" — your team can Google that. I'm talking about the stuff that actually slows teams down: how specs get written, how work gets scoped, how handoffs between engineering and product happen, and how the output gets communicated to the rest of the company.

Everything gets applied to whatever's already on their plate. Not demo repos.

Who this is for

This is for you if:

- You run a tech or media company, or an agency with an in-house or near-shore engineering team

- You're the CEO, CTO, COO, or GM — someone who owns a P&L or a major function

- Your team's already on Claude Code, but adoption's uneven — a few power users, a lot of people still figuring it out

- You suspect your team should be shipping faster but can't tell if it's a tooling problem, a workflow problem, or a confidence problem

- You want results in weeks, not quarters — without pausing your roadmap

Your team should already be using Claude Code — even if half of them are barely scratching the surface. I can help a few stragglers get set up, but this isn't "intro to AI." It's for teams who've started and want to get seriously better at using Claude Code on real work — real PRs, real tickets, multi-step builds.

Haven't tried Claude Code yet? The AI Diagnostic is a better starting point. On a different tool? Probably not a fit. Need something built, not your team trained? That's the 1-Week Build Sprint.

This training uses Claude Code. I'm not interested in a debate about Claude Code vs Codex — I could argue both sides. A lot of these tools will converge. But each has its own vocabulary, skills, and workflows, and the differences are meaningful enough that it makes sense to unify on a single platform for training.

This is about shipping. Productive building. If a team is more interested in arguing about which AI tool is best, they're missing the forest for the trees — and I can't help with that.

Claude Code is what I build with every day. The curriculum, the interactive training, and the installable skills are all built on it. If your team's on something else, I'm probably not the right person.

On working with Ben:

"Leveling up AI fluency is way harder than people think. We've been at it for nine months. Ben's the guy I go to when I get stuck."

— Bo Weathersbee, CEO, Till CFO

I'm not a magician. This requires buy-in from your team. I can give them the system, the tools, and the reps, but if they don't want to change how they work, no training will fix that.

What you get

Two ways through the material. Your team picks what works.

The interactive training. Each engineer types /training in Claude Code. It figures out whether that person is brand new to agentic workflows, half-competent, or already pushing hard, then adjusts from there. Exercises run on their actual codebase. Progress sticks across sessions. Nobody falls behind. No manager chasing people through a curriculum.

The written guides. 18 production-oriented references — from getting started to multi-model review patterns to debugging agentic builds.

Same curriculum powers both. The interactive path just makes sure it lands.

Baseline assessment

Anonymous survey of your engineers and leadership before kickoff. Surfaces the real gap between what leadership thinks is happening and what engineers are actually doing.

Curriculum + installable tools

Private repo with 18 production-oriented guides, hands-on exercises, and Claude Code skills that install directly into their environment with best practices built in.

Type /training and the interactive program adapts to where they are.

Master build recording

Full-length recording of me building a real AI product from scratch. Not a highlight reel — the entire workflow, including where I got it wrong and had to fix it.

"Watching Ben build — seeing the actual decisions, the scoping, how he recovers when something doesn't work — is worth as much as the product itself."

— Jason Adams, President, Next Thing Technologies

Live working sessions

Two 60–90 minute sessions. Key concepts, live demos, and working through the specific problems your team is stuck on.

Two weeks of direct access

After the live sessions, your team sends me what they're stuck on. I reply with short video walkthroughs. This is where concepts become changed habits.

At a glance

| What | When |
|---|---|
| Baseline assessment | Before kickoff |
| Curriculum access + installable tools | Day 1 |
| Master build recording | Day 1 |
| Live working sessions | First week |
| Async follow-up window | 2 weeks after session |

If the baseline assessment shows your team isn't ready, I'll tell you before we start and refund the full fee. If you've already done an AI Diagnostic, its $2,500 fee is credited toward the Training Pack.

I'll tell you if the Training Pack fits or if something else makes more sense.

Rather skip the call?

Fill out the form and I'll reply with next steps.